How to use LLMs for your SaMD regulatory documentation

Generative AI is a powerful technology. When wielded correctly, Large Language Models (LLMs) can support the drafting of regulatory documentation for submissions to a Conformity Assessment Body (CAB). However, to harness the technology's power, it pays to be aware of its strengths and limitations. Here's how to use it appropriately for SaMD and AI medical devices.

When developing medical software, the manufacturer must evidence their Quality Management System (QMS) and the devices’ development, safety, and performance through comprehensive documentation. It can be an arduous task to compile and manage this documentation across multiple teams, to craft it in a format that a Conformity Assessment Body expects, and then to tell a cohesive, comprehensive narrative without contradictions or gaps.

As a technology, LLMs are well placed to support this type of complex documentation task. An LLM can generate detailed text output with clear narratives and specified formats. It can act as a document reviewer to increase cohesion and spot inconsistencies across documentation. And, if provided with an accurate regulatory context, it can also audit your documentation for potential regulatory missteps before you submit.

It’s quite tempting to lean on this technology to help generate your regulatory documentation, and there’s no reason you should shy away from this. Scarlet encourages the use of technologies and tools to improve the accuracy and speed with which you produce your submission documentation, provided they are used correctly.

Here is our practical guidance on using generative AI effectively to avoid issues and delays during your assessment.

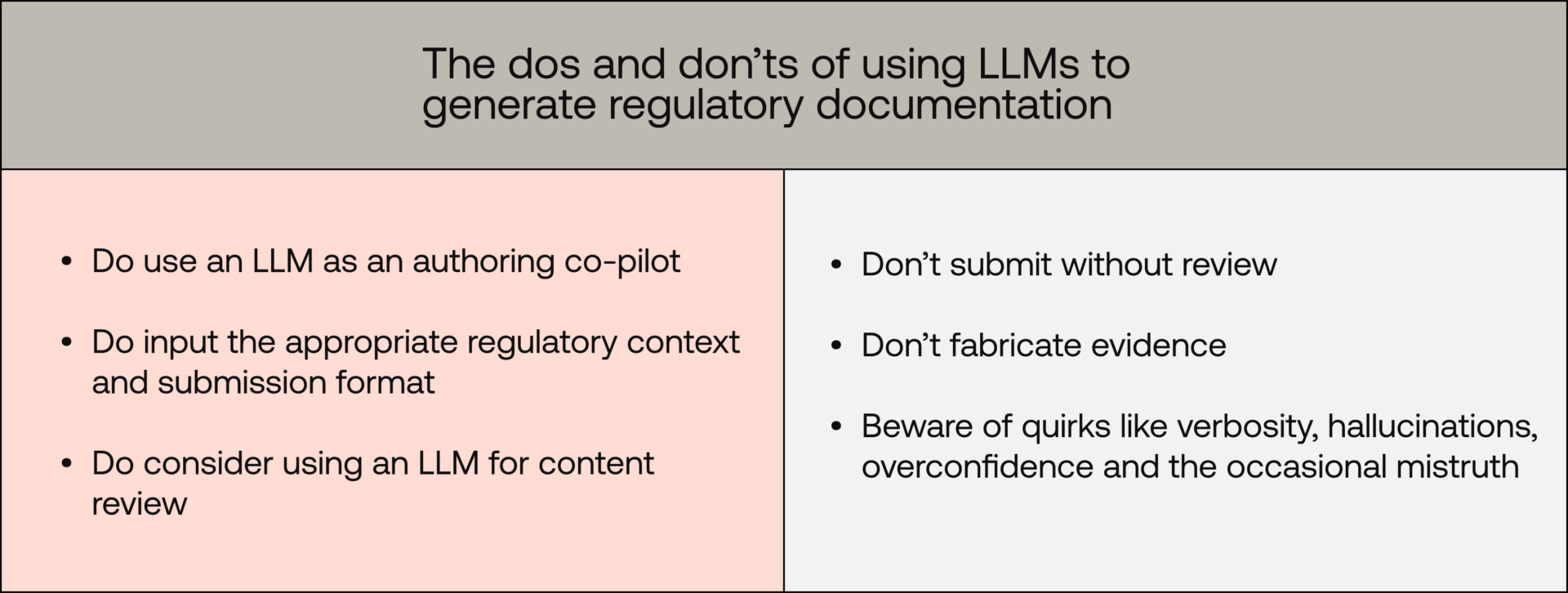

The dos

Use an LLM as an authoring co-pilot

When creating medical device documentation, the LLM is best used as a co-pilot for authoring. As the medical device manufacturer, it is your role to document your organisation’s QMS and the regulatory activities related to your medical devices’ development. This is factual, real-world data specific to you, which a trained LLM has no knowledge of. So the LLM cannot write this for you; it can only assist.

For each specific document or content topic, it is wise to draft factual information in an unpolished format and provide it as context to your LLM. The LLM can then help structure your content, build the narrative, and help you iterate towards a polished, submittable document.

Feed the LLM the appropriate regulatory context

LLMs work best when provided with the appropriate supporting context. When creating medical device documentation, your goal is to demonstrate that you are compliant with medical device regulations, standards, and best-practice guidance. You should ensure that this objective is stipulated in your prompts.

For example, when drafting your risk-management plan for an EU MDR submission, the relevant references include the EU MDR, ISO 14971:2019, and ISO/TR 24971:2020. Include these as supporting context in your prompt, along with a clear objective.

This approach helps to ground the LLM's internal chain of thought in producing an output that demonstrates your compliance with the relevant clauses of these regulations and standards.
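As a minimal sketch of this approach, the prompt can be assembled from three parts: the objective, the regulatory context, and your own factual notes. The `ContextDocument` class, the `build_prompt` function, and the section labels are illustrative assumptions, not part of any particular LLM tool, and the placeholder excerpts must be replaced with text you are licensed to use.

```python
from dataclasses import dataclass


@dataclass
class ContextDocument:
    """A reference text supplied to the LLM (hypothetical structure)."""
    title: str
    excerpt: str


def build_prompt(objective: str, references: list[ContextDocument], draft_notes: str) -> str:
    """Assemble one prompt: objective first, then regulatory context, then the facts."""
    sections = [f"OBJECTIVE\n{objective.strip()}"]
    for ref in references:
        sections.append(f"REGULATORY CONTEXT: {ref.title}\n{ref.excerpt.strip()}")
    # The manufacturer's facts go last and are marked as not to be altered.
    sections.append(
        "MANUFACTURER FACTS (do not add to or alter these)\n" + draft_notes.strip()
    )
    return "\n\n".join(sections)


prompt = build_prompt(
    objective="Draft a risk-management plan that demonstrates compliance with the references below.",
    references=[
        ContextDocument("EU MDR", "<paste the relevant excerpt here>"),
        ContextDocument("ISO 14971:2019", "<paste the relevant clauses here>"),
        ContextDocument("ISO/TR 24971:2020", "<paste the relevant guidance here>"),
    ],
    draft_notes="<your unpolished, factual notes>",
)
print(prompt)
```

Keeping the objective, the references, and the facts in separate labelled sections makes it easier to see afterwards which part of the prompt a given output statement came from.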

Feed the LLM the preferred submission formats

Many Conformity Assessment Bodies (Scarlet included) offer preferred submission formats. This information can provide:

- A skeleton of the individual documents or key topic areas to cover

- Expected sections or content within each document/topic area

- Recommended file formats for different data types (e.g. narrative content, tabular data, and traceability matrices)

Providing the preferred submission formats to the LLM as part of your context can be very valuable. LLMs are adept at transforming information from one format to another. If you provide an LLM with factual, unpolished information and supplement this with the preferred submission format, it can produce an output that retains the information while structuring it in line with the desired output.
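Once the LLM has restructured your content, a simple script can confirm that every expected section is present. The sketch below assumes the preferred format is a list of section headings and that each heading sits on its own line in the output. The heading names are hypothetical; use the skeleton your Conformity Assessment Body publishes.

```python
def missing_sections(expected_headings: list[str], document_text: str) -> list[str]:
    """Return expected headings that do not appear as a line in the document."""
    present = {line.strip().lower() for line in document_text.splitlines()}
    return [h for h in expected_headings if h.strip().lower() not in present]


# Hypothetical skeleton for illustration only.
skeleton = ["Scope", "Responsibilities", "Risk acceptability criteria", "Verification activities"]

llm_output = """Scope
This plan covers ...

Responsibilities
The risk manager ...

Verification activities
Each control is verified by ...
"""

print(missing_sections(skeleton, llm_output))  # ['Risk acceptability criteria']
```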

Use an LLM for content review

As well as their use as co-pilot authors, LLMs can also be applied to content review. Medical device documentation can be large and highly interrelated. With many contributors working on the shared documentation set, it is very easy for inconsistencies and gaps to develop.

Common review prompts, used across teams, can help achieve uniform styling and prove very useful when checking consistency and completeness across multiple documents. This can usually unearth the following kinds of issues:

- Missing document references: For example, have you referenced a document but not included it in the submission set?

- Missing work: For example, have you forgotten to include the execution reports for some planned regulatory activities?

- Internal consistency: For example, do the outputs of your risk management, clinical evaluation, and validation activities coalesce into a common narrative?

Similarly, it can be helpful to ask the LLM to adopt the role of a medical device expert and audit your documentation for compliance gaps. Once it is provided with the relevant medical device standards and related domain knowledge, it can spot areas of documentation where your evidence is weak, or even non-compliant.

It's worth noting that an LLM review should never be a full substitute for human oversight, but it is another useful weapon in your arsenal when checking for compliance issues before submission.
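Some of these checks, such as missing document references, can also be run deterministically alongside an LLM review. The sketch below assumes every document carries an identifier such as `RMP-001`. That identifier scheme, the `unresolved_references` function, and the sample texts are invented for illustration.

```python
import re

# Hypothetical ID scheme: 2 to 5 capital letters, a hyphen, three digits.
DOC_ID = re.compile(r"\b[A-Z]{2,5}-\d{3}\b")


def unresolved_references(documents: dict[str, str]) -> dict[str, list[str]]:
    """Map each document ID to the IDs it cites that are absent from the set."""
    gaps = {}
    for doc_id, text in documents.items():
        cited = set(DOC_ID.findall(text)) - {doc_id}
        missing = sorted(cited - set(documents))
        if missing:
            gaps[doc_id] = missing
    return gaps


submission = {
    "RMP-001": "Risk controls are verified per SVP-002 and reported in RMR-003.",
    "SVP-002": "Verification follows the plan in RMP-001.",
}
print(unresolved_references(submission))  # {'RMP-001': ['RMR-003']}
```

A script like this only catches references that follow the assumed ID pattern, so it complements the LLM and human reviews and does not replace them.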

The don’ts

Don't submit without review

Under ISO 13485, your documentation must be owned, reviewed, and approved by individuals within your organisation who are sufficiently competent and authorised. This is a regulatory requirement that applies regardless of how that documentation was produced.

Using an LLM to co-author or review your submission does not change this obligation.

Beyond compliance, robust human review before submission is sound practice. LLM-induced errors discovered during assessment can result in application rejection, additional findings, and extended certification timelines. Catching these issues internally is less costly than encountering them mid-assessment.

Don't fabricate evidence

This one should be obvious, but it is worth highlighting due to its importance. Your submission documentation must reflect reality. Its purpose is to provide evidence of your QMS and of the regulatory medical-device activities you have conducted, including design and development, usability testing, clinical evaluation, and risk management.

Do not use LLMs to fabricate evidence of procedures that are not followed, nor of regulatory activities that were not conducted. This is tantamount to fraud and will undoubtedly be uncovered through the assessment procedures. Penalties for such transgressions can be very severe, and this scenario is best avoided.

The cautions

While there are clear use cases for LLMs in generating regulatory documentation (and things to steer well clear of), there are a few quirks of the tool to look out for should you choose to go down this path.

Hallucinations, overconfidence, and lies

Anyone who has used LLMs will have experienced this problem. On occasion, the LLM's output is simply wrong: it hallucinates a mistruth and delivers it with unwarranted confidence. On other occasions, these mistruths are so bad as to be considered clear lies.

As you ask the LLM to do more complex tasks, such as restructuring to fit a preferred format, checking for internal consistency across your documents, or auditing for compliance issues, the chances that it begins to diverge from the truth increase significantly.

Always review and scrutinise the LLM's output to ensure the reality of your medical device development is preserved.
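One way to support this scrutiny is to compare the hard facts in your source notes with those in the LLM's output. The sketch below is a rough heuristic that assumes numbers and record identifiers such as `HAZ-12` are the facts most worth checking. The token pattern, the function names, and the sample sentences are all invented for illustration.

```python
import re

# Numbers (optionally decimal or percentage) and hypothetical record IDs such as "HAZ-12".
FACT_TOKEN = re.compile(r"\b\d+(?:\.\d+)?%?|\b[A-Z]{2,}-\d+\b")


def fact_tokens(text: str) -> set[str]:
    """Collect every number and record ID that appears in the text."""
    return set(FACT_TOKEN.findall(text))


def compare_facts(source_notes: str, llm_output: str) -> dict[str, list[str]]:
    """List tokens the LLM dropped from the notes and tokens it introduced."""
    src, out = fact_tokens(source_notes), fact_tokens(llm_output)
    return {"dropped": sorted(src - out), "introduced": sorted(out - src)}


notes = "Usability study: 15 participants, 2 use errors, linked to HAZ-12."
output = "The usability study enrolled 18 participants and recorded 2 use errors (HAZ-12)."
print(compare_facts(notes, output))  # {'dropped': ['15'], 'introduced': ['18']}
```

Any dropped or introduced token is a prompt for a human to look closer. A clean result does not show that the prose around those facts is accurate.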

Verbosity

Submission documentation is multifaceted, multidisciplinary, and must comprehensively cover your QMS and various regulatory medical-device-development activities. The document set is naturally quite large.

To help ensure efficient review of large submission documentation, assessors prefer content that is informative, descriptive, justified, and yet concise.

It is this last characteristic that LLMs can struggle with, as LLM output tends to be verbose. If you are using LLMs extensively when building your documentation, you may find that the volume of content grows rapidly. It is wise to prompt the LLM to produce content that is informative, descriptive, well supported, and concise, and to iterate until the output reaches an acceptable balance. This will make the assessment team’s reviews, and your own internal reviews, simpler.
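A word budget per section is one simple way to notice when content is growing. In the sketch below, the 400-word limit and the section names are arbitrary assumptions rather than an assessor requirement.

```python
def sections_over_budget(sections: dict[str, str], max_words: int) -> dict[str, int]:
    """Return the word count of every section that exceeds the budget."""
    counts = {name: len(text.split()) for name, text in sections.items()}
    return {name: count for name, count in counts.items() if count > max_words}


draft = {
    "Intended purpose": "A short, factual statement of intended purpose.",
    "Device description": "word " * 450,  # stand-in for a long LLM-generated section
}
print(sections_over_budget(draft, max_words=400))  # {'Device description': 450}
```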

Traceability

LLMs work very well with natural language. When co-authoring, LLMs are great at understanding natural-language context and prompts, and at producing natural-language outputs. However, not all medical device documentation exists in this format.

Traceability in technical documentation is one such example. The medical device regulations require you to provide clear traceability through risk management and software development records. For example, it is common for manufacturers to produce traceability matrices to show the relationship between:

- Risk records: Hazards -> hazardous situations -> harms -> estimation -> evaluation -> control -> residual risk acceptance

- Software records: Use requirements -> software requirements -> software design components -> unit tests -> integration tests -> software-requirements verification tests -> validation tests

These traceability matrices are commonly provided in tabular format and consist of hundreds of individual, interrelated records. As humans, we are adept at making sense of tabular structures. However, the volume of interrelated information these tables inherently capture may be overwhelming for an LLM to properly comprehend and reason with.

Managing such complex traceability is an area where human oversight is best retained, at least until generative AI technology advances further.
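Deterministic tooling can support that human oversight. The sketch below assumes the software traceability matrix is exported as CSV with one trace link per row. The column names and record IDs are invented for illustration, and the check only finds empty cells. It does not judge whether a link is correct.

```python
import csv
import io

# Hypothetical export of a software traceability matrix; column names are assumptions.
MATRIX_CSV = """user_requirement,software_requirement,verification_test
UR-1,SR-1,VT-1
UR-1,SR-2,
UR-2,SR-3,VT-2
UR-3,,
"""


def untraced_rows(matrix_csv: str, required_columns: list[str]) -> list[tuple[int, list[str]]]:
    """Return (row_number, empty_columns) for every row with a broken trace link."""
    gaps = []
    reader = csv.DictReader(io.StringIO(matrix_csv))
    for row_number, row in enumerate(reader, start=2):  # row 1 is the header
        empty = [col for col in required_columns if not (row.get(col) or "").strip()]
        if empty:
            gaps.append((row_number, empty))
    return gaps


gaps = untraced_rows(MATRIX_CSV, ["user_requirement", "software_requirement", "verification_test"])
print(gaps)  # [(3, ['verification_test']), (5, ['software_requirement', 'verification_test'])]
```

The flagged rows give a reviewer a short list of places to start, which is more tractable than reading hundreds of records end to end.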

In summary

Generative AI offers genuine value in preparing medical device regulatory submissions, but only when used thoughtfully. If deployed as co-authors and reviewers, LLMs can meaningfully reduce the burden of documentation. However, their tendency to hallucinate, produce verbose output, and struggle with complex traceability structures means human oversight remains non-negotiable.

If used well, with appropriate regulatory context, preferred submission formats, and rigorous human review, LLMs can accelerate your path to certification. If used poorly, they can introduce errors, inconsistencies, and, in the worst case, fraudulent content that can jeopardise your submission entirely.

Ultimately, the responsibility for the documentation lies with the manufacturer and not the LLM, but using this technology to support that process is entirely possible and can prove hugely valuable.
