Call my agent – What you need to know about getting agentic AI medical devices certified

Agentic AI will allow medical devices to take actions that have historically been the responsibility of clinicians. What are the regulatory considerations for manufacturers of medical devices that are becoming increasingly agentic?

The most common Software as a Medical Device (SaMD) products are designed to inform clinicians and support human decision making. Historically, these have been purpose-built tools that analyse data, detect patterns, and present outputs that support professional clinical judgement. It is then up to clinicians to review, interpret, and act on these outputs, making sense of a broad range of data sources to reach effective and accurate clinical decisions.

With the advent of AI as a Medical Device (AIaMD), novel approaches to these traditional SaMD applications have been introduced, but always within predefined scopes and functional constraints.

Agentic AI now promises to take that one step further, allowing the development of medical device software that can adapt to a much broader range of inputs and shifting priorities and, with the right safeguards in place, take actions typically reserved for clinicians.

This blog post explores the continuum of agency in AI medical devices, the regulatory implications of increasing autonomy, and the practical considerations for manufacturers developing and governing agentic systems.

What are agentic systems?

When does a system become 'an agent'? An AI system is considered 'agentic' when it can autonomously accomplish goals over time, adapting to shifting input and/or feedback, rather than relying on individual prompts and lacking persistent memory. Key behavioural aspects of agentic AI include:

- Goal-oriented behaviour (as opposed to task-oriented)

- Persistent memory and planning

- Ability to leverage other tools (including other AI tooling)

- Ability to adapt to dynamic input and feedback

- Limited need for human intervention

A traditional AIaMD might evaluate electronic health record (EHR) data and output a sepsis risk score, flagging an alert when a patient is identified as high risk. Here the output is informational; there is no direct modification to the workflow or automatic escalation. The clinician must review the information and act.

A similar device with agentic AI might take in these same inputs, but have more agency in adapting the workflow. It might re-order patient triage priority, automatically page a rapid-response team, and initiate a sepsis protocol checklist.
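The contrast between the two sepsis examples can be sketched in code. This is a minimal illustration rather than a real device design: the threshold value, function names, and action labels are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical threshold for illustration only; not a clinically validated value.
HIGH_RISK_THRESHOLD = 0.8


@dataclass
class InformationalOutput:
    """What a traditional AIaMD returns: information for a clinician to act on."""
    risk_score: float
    alert: bool


@dataclass
class AgenticOutput:
    """What an agentic device might return: the same information plus actions it initiated."""
    risk_score: float
    alert: bool
    actions_initiated: list[str] = field(default_factory=list)


def informational_device(risk_score: float) -> InformationalOutput:
    # Flags high risk but changes nothing in the workflow.
    return InformationalOutput(risk_score, alert=risk_score >= HIGH_RISK_THRESHOLD)


def agentic_device(risk_score: float) -> AgenticOutput:
    output = AgenticOutput(risk_score, alert=risk_score >= HIGH_RISK_THRESHOLD)
    if output.alert:
        # The device itself alters the workflow rather than waiting for a clinician.
        output.actions_initiated = [
            "reorder_triage_priority",
            "page_rapid_response_team",
            "start_sepsis_protocol_checklist",
        ]
    return output
```

Both functions see the same input and reach the same alert decision. The regulatory difference lies in what happens next: in the first case a clinician decides what to do, while in the second the device has already acted.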

Other agentic medical devices could synthesise findings across a broad context, including radiography results, laboratory tests, and electronic medical record data to assign patients to pre-defined diagnostic or treatment pathways, automatically escalating cases that need it.

In more advanced configurations, a coordinated set of specialised AI agents could conduct an open-ended patient history, document findings directly into the medical record, generate and prioritise differential diagnoses, and pre-structure evaluations or treatment plans based on the differentials.

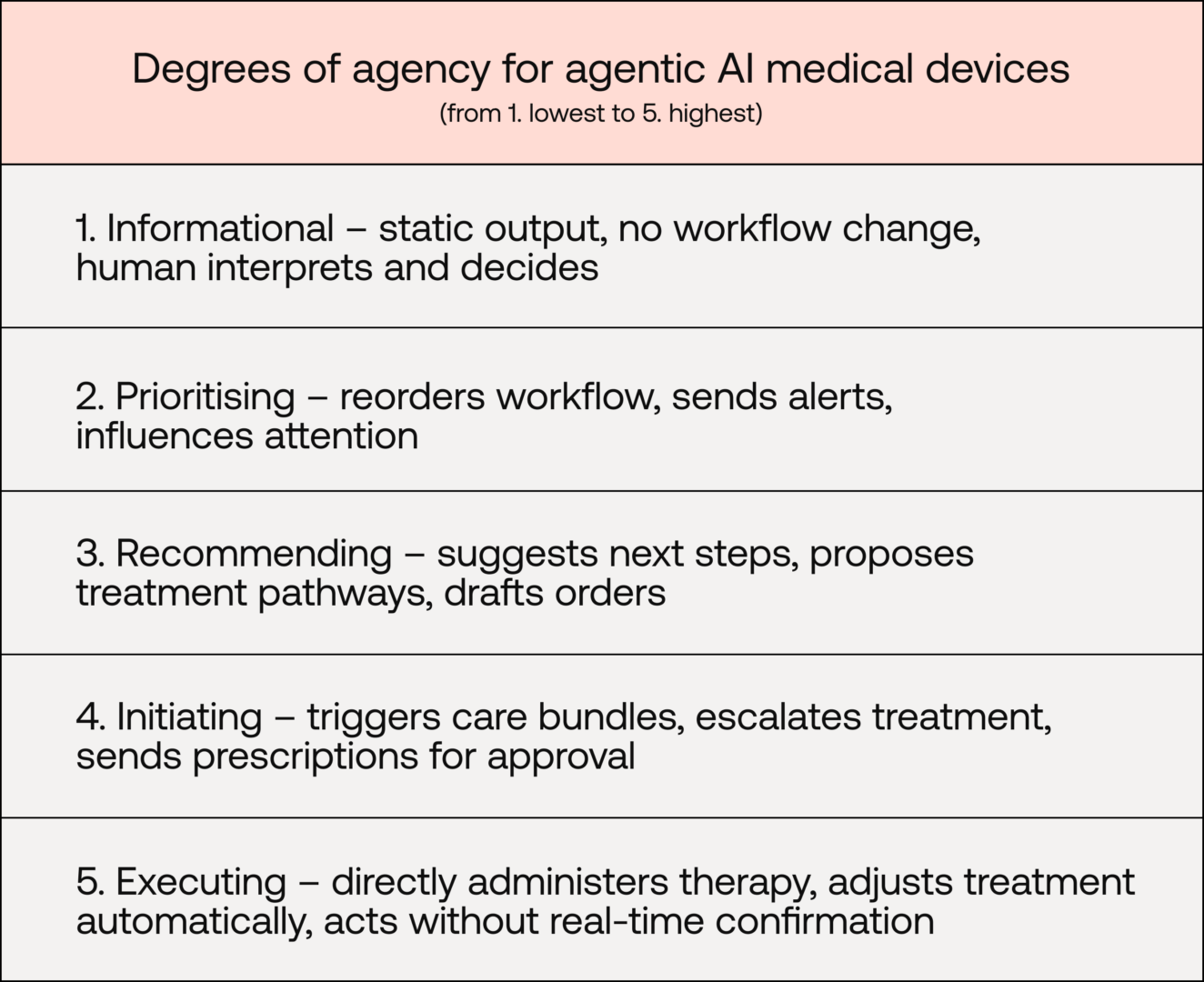

A continuum of agency

There is a continuum of agency at play here. Consider these varying levels.

- No/low-agency devices – Have static output and don't change workflows. Devices at the more agentic end of this band might assemble context more dynamically, but a human still needs to interpret, decide, and act

- In the middle – Devices might prioritise cases, influence attention through alerts, or recommend and facilitate next steps

- At the higher end of agency – Devices initiate next steps independently and execute treatments

To flesh this out further, let's consider how five levels of agency for medical devices might look:

Note: Under EU MDR Annex VIII, Rule 11 governs the classification of SaMD based on the significance of the information it provides. It groups devices into those that "provide information" and those that "monitor physiological processes".

This continuum is crucial because it dictates how manufacturers might need to adapt their regulatory approach.

Autonomy and human oversight

The World Health Organization's key ethical principles for AI for health state that human autonomy should not be undermined. While the use of agentic AI will shift some decision-making power from clinicians to devices, the EU AI Act requires that high-risk AI systems are designed so that natural persons can:

- Correctly interpret output

- Understand the capacities and limitations of the system

- Monitor its operation, including detecting and addressing unexpected performance

- Remain aware of automation bias

- Intervene, override, or stop the system

Under EU MDR, autonomy and human oversight aren't directly addressed outside of closed-loop systems for active therapeutic devices. Clinical impact is regulated as a risk-based process where increasing autonomy would increase risk classification, clinical-evidence burden, and software safety classification.

Regulatory compliance in practice

The evidence and conformity-assessment process required for agentic AI medical devices is not dissimilar from that for other SaMD. However, with increasing autonomy comes increased risk, and thus an increased evidence burden and greater scrutiny. This is compounded for devices with broad intended purposes.

While the regulatory landscape around AI is evolving, manufacturers should address the following:

Clear intended purpose matched to clinical evaluation and risk

- Regulatory shift: Increasing autonomy increases significance of device actions

- Implication: Increased need for precise intended purpose aligned with device capabilities

- Required evidence: Clinical evaluation must support specific claims and address not only model accuracy, but the safety and appropriateness of downstream actions within real-world workflows

Robust and proactive software verification and validation practices

- Regulatory shift: Novel areas of concern, including goal alignment with intended use, autonomous actions, secure external integrations (including software of unknown provenance, SOUP), and shutdown mechanisms

- Implication: Robust and proactive software verification and validation processes are needed to support safety and security

- Required evidence: Established processes for safeguarding data integrity, detecting model behavioural drift, and verifying risk-control mechanisms

Robust risk management and focus on patient safety

- Regulatory shift: A shortened causal chain between output and clinical consequence shifts hazard analysis from incorrect information to inappropriate action

- Implication: Increased autonomy reduces reliance on clinician vigilance as a risk control

- Required evidence: Risk reduction must be demonstrable by design (e.g. bounded autonomy, tiered escalation, validated overrides). Implementation and effectiveness of controls must be evidenced in line with EU MDR Annex I and ISO 14971
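
As a rough illustration of what "bounded autonomy, tiered escalation, validated overrides" could look like in software, the sketch below gates every proposed action through an allow-list and a confidence check. The tier names, action labels, and confidence threshold are hypothetical and chosen only for illustration. A real device would derive them from its risk analysis.

```python
from enum import IntEnum


class Tier(IntEnum):
    """Hypothetical escalation tiers, ordered by how much human involvement is needed."""
    EXECUTE = 0         # device may act on its own
    CONFIRM = 1         # device proposes, a clinician must confirm
    CLINICIAN_ONLY = 2  # device may only inform


# Hypothetical allow-list: the bound on autonomy. Anything not listed is clinician-only.
ACTION_TIERS = {
    "start_protocol_checklist": Tier.EXECUTE,
    "page_rapid_response_team": Tier.CONFIRM,
}

# Hypothetical confidence below which every action is escalated by one tier.
MIN_CONFIDENCE = 0.9


def required_tier(action: str, confidence: float) -> Tier:
    tier = ACTION_TIERS.get(action, Tier.CLINICIAN_ONLY)
    if confidence < MIN_CONFIDENCE:
        tier = Tier(min(tier + 1, Tier.CLINICIAN_ONLY))
    return tier


def may_execute(action: str, confidence: float,
                clinician_confirmed: bool = False, stopped: bool = False) -> bool:
    """Return True only if the device is permitted to carry out the action."""
    if stopped:  # a clinician stop always takes precedence
        return False
    tier = required_tier(action, confidence)
    if tier is Tier.EXECUTE:
        return True
    if tier is Tier.CONFIRM:
        return clinician_confirmed
    return False
```

The design choice here is that the default is the most restrictive tier: an action the manufacturer never assessed cannot be executed, and low confidence can only move an action towards more human involvement, never less.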

Lifecycle monitoring and leverage of real-world data

- Regulatory shift: Agentic systems may have evolving behaviour over time, affecting technical performance and clinical workflows

- Implication: Monitoring should extend beyond technical performance to human–AI interaction and usage patterns

- Required evidence: Manufacturers must demonstrate structured PMS and PMCF processes that actively detect performance and behavioural drift and analyse real-world data trends
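
One way to monitor human–AI interaction, sketched below under stated assumptions, is to track how often clinicians override the device over a rolling window and compare that rate with the rate observed during validation. The class name, baseline, tolerance, and window size are hypothetical. Real limits would come from the manufacturer's PMS plan.

```python
from collections import deque


class OverrideRateMonitor:
    """Tracks how often clinicians override the device over a rolling window."""

    def __init__(self, baseline_rate: float, tolerance: float, window: int):
        self.baseline_rate = baseline_rate  # rate observed during validation (assumed known)
        self.tolerance = tolerance          # acceptable deviation from the baseline
        self.events = deque(maxlen=window)  # oldest events drop off automatically

    def record(self, overridden: bool) -> None:
        self.events.append(overridden)

    def current_rate(self) -> float:
        return sum(self.events) / len(self.events) if self.events else 0.0

    def drift_detected(self) -> bool:
        # Only judge once the window is full, to avoid alarms on tiny samples.
        if len(self.events) < self.events.maxlen:
            return False
        return abs(self.current_rate() - self.baseline_rate) > self.tolerance
```

The check is deliberately two-sided. A rising override rate may suggest degrading performance, while a falling one may suggest clinicians are deferring to the device more than they did during validation, which is one possible sign of automation bias.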

Updates and continuous learning

A defining feature of many agentic AI systems is adaptivity. Systems update models, integrate new data, and adjust decision logic over time. While this kind of self-learning may improve performance, it also challenges the regulatory assumption that the device assessed and certified is the same as the device on the market.

Substantial changes to medical devices require re-assessment. Whether changes arise through explicit manufacturer-initiated updates or through self-initiated retraining, threshold adjustment, prompt modification, or data expansion, manufacturers must ensure that the device remains within the originally assessed scope.

Manufacturers should define in advance what changes will be made to a device post-market, and how those changes will be developed, validated, implemented, and monitored. Predetermined change control plans (PCCPs) are one way manufacturers can pre-specify device changes and have them approved by their Notified Body.
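
To make the idea of a pre-specified change envelope concrete, the sketch below checks a proposed change against a plan before release. It is an illustration only: the field names, change types, and performance criteria are hypothetical, and the actual content of a change control plan is for the manufacturer and its Notified Body to agree.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangePlan:
    """Hypothetical pre-specified envelope for post-market changes."""
    allowed_change_types: frozenset[str]
    min_sensitivity: float
    min_specificity: float


@dataclass(frozen=True)
class ProposedChange:
    change_type: str
    validated_sensitivity: float
    validated_specificity: float


def within_plan(change: ProposedChange, plan: ChangePlan) -> bool:
    """True if the change stays inside the pre-specified envelope."""
    return (
        change.change_type in plan.allowed_change_types
        and change.validated_sensitivity >= plan.min_sensitivity
        and change.validated_specificity >= plan.min_specificity
    )


# Example values are made up for illustration.
plan = ChangePlan(
    allowed_change_types=frozenset({"retraining", "threshold_adjustment"}),
    min_sensitivity=0.90,
    min_specificity=0.85,
)
retrain = ProposedChange("retraining", 0.93, 0.88)
new_prompt = ProposedChange("prompt_modification", 0.95, 0.90)
```

In this example `within_plan(retrain, plan)` is true, while `within_plan(new_prompt, plan)` is false even though its performance is higher, because prompt modification was never included in the plan. A change that falls outside the envelope would need to go through re-assessment instead.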

Conclusion

Agentic AI shifts medical device software along the continuum of agency: from informing clinical judgement to influencing, initiating, and, in some cases, executing elements of care.

For manufacturers, this does not take devices outside of existing regulatory frameworks. In fact, it heightens their application. As autonomy increases, so too does the device's risk profile, the depth of clinical evaluation required, and the level of scrutiny applied during regulatory assessment.

Precision in intended purpose, alignment between claims and evidence, and careful consideration of downstream clinical impact are all key.

In practice, successful regulatory approaches for agentic systems will depend on disciplined software lifecycle development. Robust software verification and validation, clear version control and change-management processes, defined safeguards around autonomous behaviour, and strong post-market surveillance are also all essential.

Early and transparent engagement with your Notified Body, grounded in risk management and patient safety, will support the responsible development of systems whose autonomy is clinically justified, technically controlled, and continuously monitored. This means more and better cutting-edge agentic systems will be able to reach the patients who need them.
