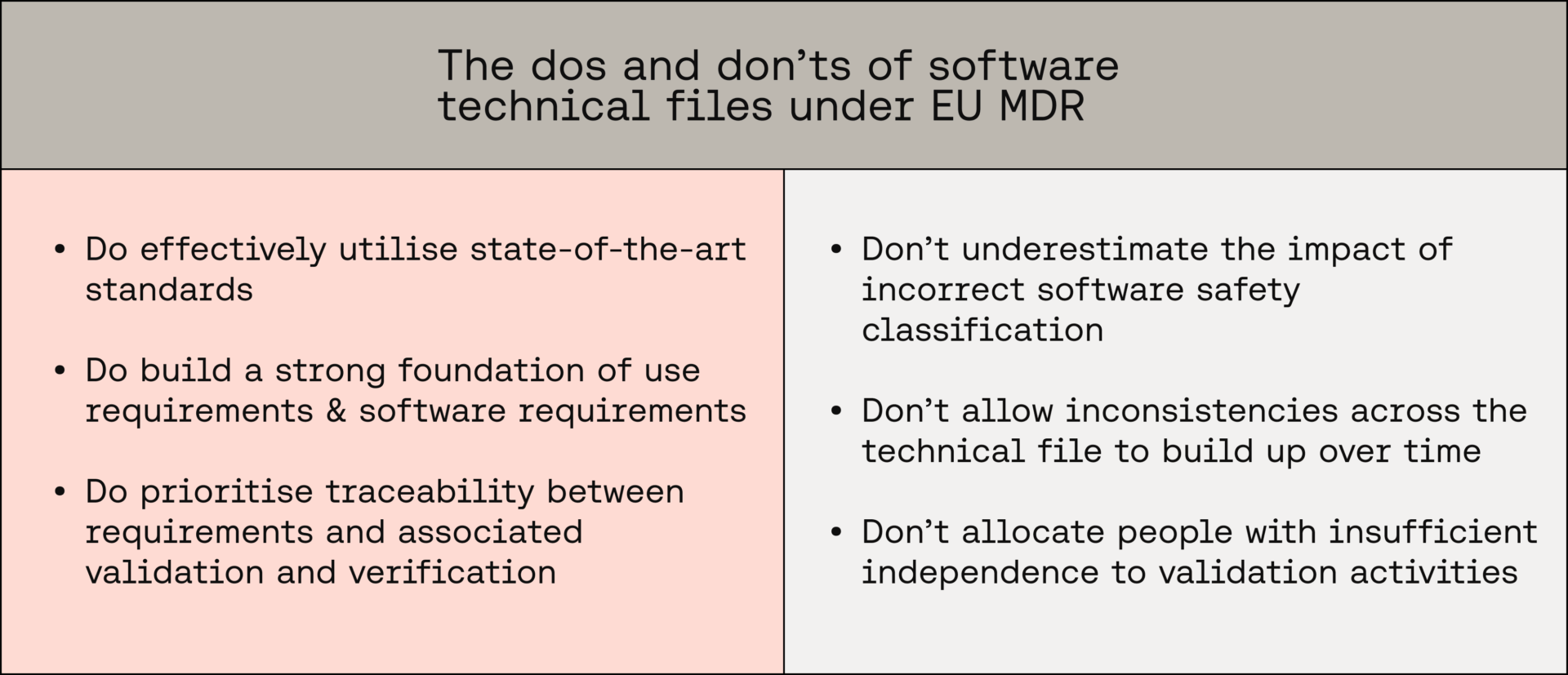

The pillars & pitfalls of the software technical file under EU MDR

In this post we set out the pillars: the essential practices that keep your technical file credible and submission ready. We also set out the pitfalls: the common traps that create gaps, contradictions, and certification delays.

You will learn how clear requirements, sound software safety classification, appropriate validation-and-verification strategy, and disciplined consistency controls form the backbone, and which missteps most often trip teams up.

Pillar 1 - Utilise SotA standards

EU MDR 2017/745 Annex II Clause 4.c dictates that manufacturers must include "the harmonised standards, common specifications or other solutions applied" in their demonstration of conformity.

More specifically for software, Annex I Chapter II clause 17.2 states the following:

"For devices that incorporate software or for software that are devices in themselves, the software shall be developed and manufactured in accordance with the state of the art taking into account the principles of development life cycle, risk management, including information security, verification and validation."

Though the specific standards utilised during SaMD development cannot be mandated by a Notified Body, it's incumbent on the manufacturer to justifiably evidence that the "state of the art" (SotA) has been considered. Luckily, there is a clear list of industry-recognised standards at manufacturers' disposal. Making effective use of these standards lays the foundation for a compliant technical file and smooth certification process.

Key standards to consider:

- IEC 62304: Recognised as state of the art for SaMD software-development life-cycle best practice. It gives manufacturers a risk-based framework that improves traceability, reduces defects, and speeds regulatory acceptance

- IEC 82304-1: The state-of-the-art product-level standard for health software. It complements IEC 62304 by defining a framework for use-level and system requirements, allowing manufacturers to show release readiness and streamline conformity assessment

- IEC 81001-5-1: The state-of-the-art cybersecurity engineering benchmark for health software. It helps manufacturers build secure-by-design products and provide clear evidence of threat mitigation

- IEC 62366-1: The state-of-the-art usability-engineering process for medical technology, and particularly valuable for SaMD interface definition. It reduces use-related risks and facilitates the generation of robust human-factors evidence for safety and performance

- ISO 14971: The state-of-the-art standard for medical-device risk management. It lets manufacturers systematically control hazards with a framework well suited to software risk, demonstrating MDR Annex I compliance across the product life cycle

Pillar 2 - Strong requirements specification

Requirements specification is the core of a strong technical file from a software perspective, having a cascading impact on many other key areas.

Use requirements

Strong requirements begin at the product level with a comprehensive set of use requirements capturing intended purpose, user and system interfaces, security and data-privacy requirements, maintenance and decommission requirements, accompanying document requirements, and applicable regulatory requirements, in line with IEC 82304-1 4.2.

Any exclusions should be explicitly justified to avoid gaps that delay assessment. These high-level requirements frame the scope for development and anchor later validation and verification.

Software requirements

Translate use requirements, system requirements, and identified software risk controls into complete, unique, testable, and traceable software requirements in line with IEC 62304 5.2. As applicable, software requirements should cover functional requirements, performance requirements, software risk-control requirements, user-interface requirements, software-interface requirements, data-definition and database requirements, operational and life-cycle requirements, cybersecurity requirements, compliance and regulatory requirements, and any specific AI requirements. Justify any non-applicable categories to support efficient review.

Testability

Write each requirement to be clear, concise, and singular. Ensure testability by making each requirement objectively measurable, naturally facilitating objective test cases and evidence. Using defined obligation terms, applying MECE (mutually exclusive, collectively exhaustive) to avoid overlap and omission, and expressing quantitative or state-based criteria all improve alignment, reduce ambiguity, and ease conformity assessment.
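To make the testability advice concrete, here is a minimal sketch of a requirement "linter". It assumes a house style in which each requirement carries exactly one obligation term, and it flags wording that tends to resist objective measurement. The word lists and the `lint_requirement` function are hypothetical and only illustrate the idea; they are not drawn from any standard.

```python
import re

# Hypothetical word lists; tailor them to your own requirements style guide.
OBLIGATION_TERMS = ("shall", "should", "may")
VAGUE_TERMS = ("fast", "user-friendly", "appropriate", "robust", "as needed")


def lint_requirement(text: str) -> list[str]:
    """Return a list of findings for a single requirement statement."""
    findings = []
    lowered = text.lower()
    words = re.findall(r"[a-z\-]+", lowered)

    # Exactly one obligation term helps keep the requirement singular.
    count = sum(words.count(term) for term in OBLIGATION_TERMS)
    if count == 0:
        findings.append("no obligation term")
    elif count > 1:
        findings.append("multiple obligation terms")

    # Vague wording usually means there is no measurable acceptance criterion.
    for term in VAGUE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            findings.append(f"vague term: {term!r}")

    return findings


good = "The software shall display the calculated dose within 2 seconds."
poor = "The software should be fast and shall be user-friendly."
print(lint_requirement(good))
print(lint_requirement(poor))
```

A check like this cannot judge whether a requirement is correct, but it can catch the most common ambiguity patterns before review.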

For a deep dive into requirements specification, read our full blog posts below:

- Building a comprehensive set of use requirements

- Building a comprehensive set of software requirements

- Why crafting proper requirements is so important for your SaMD (and how to do so)

- How to make SaMD requirements testable

Pillar 3 - Traceable verification & validation

With a strong set of requirements defined, the next step is to ensure they are comprehensively verified and validated, covering software and use requirements respectively.

Though approached at different levels, both verification and validation allow manufacturers to prove, through the provision of objective evidence, that the software does what it's supposed to do and that the intended purpose has been met.

Verification

Ensure all software units have been implemented correctly, all software items have been integrated successfully, all software requirements can be traced directly to tests that cover them, and of course that all required tests pass.

These tests can take the form of unit tests, integration tests, regression tests, and general software-system tests (IEC 62304 5.7.1 note 1 permits the combining of software system and integration testing).

It's important to note that the requirement-to-test relationship does not need to be 1-1; it's acceptable to cover multiple requirements in a single test. It is, however, critical that all requirements are clearly covered by their associated tests. Atomic requirement definition helps immensely here.
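A many-to-many trace is easy to check automatically. The sketch below assumes requirements and tests are identified by simple string IDs; the `VerificationRecord` type, the `trace_gaps` function, and the ID formats are hypothetical. It reports requirements with no covering test, tests that cite requirements missing from the specification, and tests that did not pass.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerificationRecord:
    test_id: str
    covers: frozenset[str]  # IDs of the software requirements this test covers
    passed: bool


def trace_gaps(requirement_ids, records):
    """Summarise traceability gaps between requirements and tests."""
    known = set(requirement_ids)
    covered = set().union(*(r.covers for r in records))
    return {
        # Requirements that no test claims to cover.
        "uncovered": sorted(known - covered),
        # Requirement IDs cited by tests but absent from the specification.
        "unknown": sorted(covered - known),
        # Tests that ran but did not pass.
        "failing": sorted(r.test_id for r in records if not r.passed),
    }


requirements = ["SRS-001", "SRS-002", "SRS-003"]
records = [
    # One test may cover several requirements.
    VerificationRecord("TC-01", frozenset({"SRS-001", "SRS-002"}), True),
    VerificationRecord("TC-02", frozenset({"SRS-009"}), False),
]
print(trace_gaps(requirements, records))
```

The same shape works for validation by swapping software requirements for use requirements and tests for validation activities.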

Validation

Ensure all required use requirements have been independently and objectively validated as met, proving the high-level intended use has been accomplished. Validation activities can take many forms, including testing activities such as high-level, end-to-end system tests, and penetration testing, in addition to inspection and analysis activities such as accompanying document analysis and verification test-report analysis.

As with verification, it's pivotal that all use requirements are traceably covered by their associated validation activities, and again this relationship doesn't need to be 1-1. Manufacturers should however avoid grouping use requirements to activities too broadly, and once again solid requirement-definition practices streamline this.

For our detailed breakdown of IEC 82304-1 software validation, check out the full blog post here.

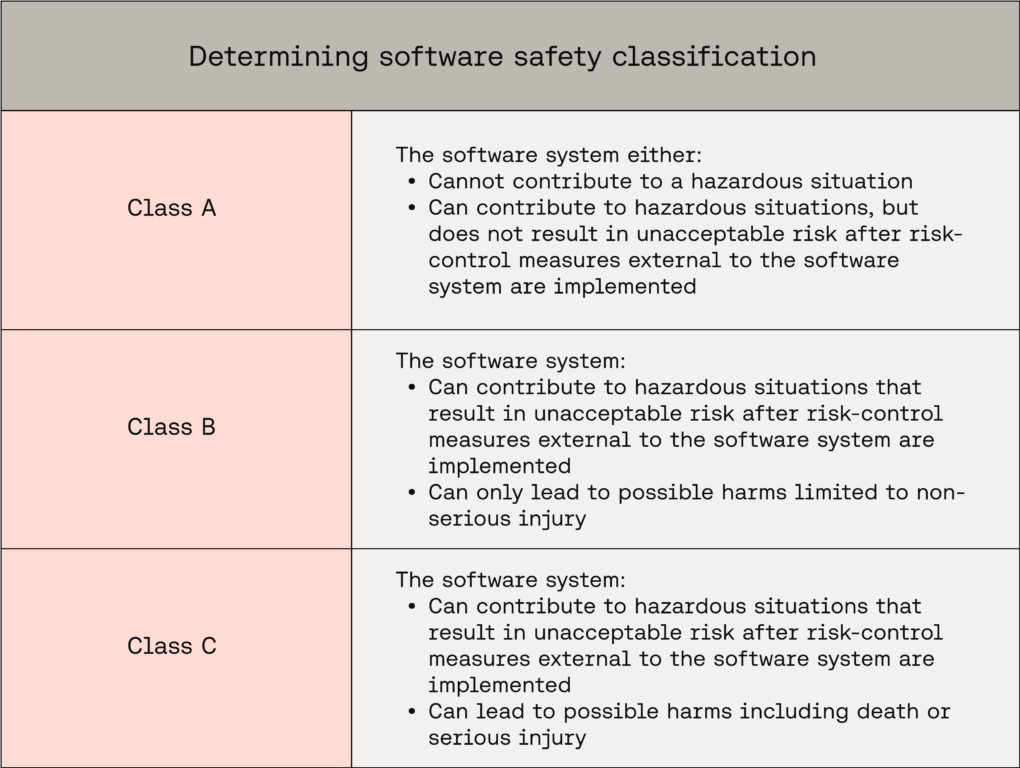

Pitfall 1 - Incorrect software safety classification

Software safety classification drives class-dependent life-cycle activities and documentation required by IEC 62304 across the technical file; it's therefore critical that manufacturers get this right before submission. Equally, software safety classification can be a tricky area for manufacturers to navigate, striking the balance between appropriate designation and over-classification.

A quick breakdown of how classification is correctly determined:

- Exact classification rules can be found in IEC 62304 Clause 4.3(a).

- Assume Class C by default. Justify a lower class using associated hazardous situations, possible harms, and external risk controls.

- The parent system or item sets the classification for its children. Any item inherits the class of its parent, and the software system’s overall class is assigned based on the most severe harm associated with the entire system.

- Lower-class children are acceptable when the architecture is carefully decomposed into effectively segregated items, isolating higher-risk functionality and supporting lower classifications for the segregated parts.

Fundamentally, accurate software safety classification requires "worst case" analysis of your software system. Only risk controls external to the software system may be considered to reduce classification. Software failure of the system, items, and any risk controls implemented in the software system must be assumed.

Two classification missteps are especially common:

- Overly monolithic software architecture: This can result in software items being unnecessarily classified as the highest associated classification in the system. By decomposing the system into atomic items and segregating risk, overall documentation burden can be reduced significantly

- Underestimating classification: This will result in missing key documentation and software-development activities, prolonging certification and potentially harming device safety. Always ensure all classifications are assigned appropriately relative to all associated hazardous situations and harms
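The classification logic described above can be sketched in code. This is a simplified reading of the decision flow, not a substitute for IEC 62304 Clause 4.3: it assumes each hazardous situation has already been assessed with software failure assumed and only external risk controls credited. All type and function names here are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class SafetyClass(IntEnum):
    A = 1
    B = 2
    C = 3


class Severity(IntEnum):
    NON_SERIOUS = 1
    SERIOUS_OR_DEATH = 2


@dataclass(frozen=True)
class HazardousSituation:
    name: str
    severity: Severity
    # True if risk is acceptable once EXTERNAL risk controls are considered.
    acceptable_after_external_controls: bool


def classify_system(situations: Optional[list]) -> SafetyClass:
    """Worst-case class over all hazardous situations the software can cause."""
    if situations is None:
        return SafetyClass.C  # not yet analysed: assume Class C by default
    worst = SafetyClass.A
    for s in situations:
        if s.acceptable_after_external_controls:
            continue  # this situation does not raise the class above A
        if s.severity is Severity.SERIOUS_OR_DEATH:
            candidate = SafetyClass.C
        else:
            candidate = SafetyClass.B
        worst = max(worst, candidate)
    return worst


def classify_item(parent: SafetyClass, situations: Optional[list],
                  segregated: bool) -> SafetyClass:
    """An item inherits its parent's class unless effectively segregated."""
    if not segregated or situations is None:
        return parent
    return min(parent, classify_system(situations))


wrong_dose = HazardousSituation("wrong dose shown", Severity.SERIOUS_OR_DEATH, False)
late_reminder = HazardousSituation("reminder delayed", Severity.NON_SERIOUS, False)

system_class = classify_system([wrong_dose, late_reminder])
print(system_class.name)
print(classify_item(system_class, [late_reminder], segregated=True).name)
print(classify_item(system_class, [late_reminder], segregated=False).name)
```

The last two lines show why segregation matters: the same reminder item takes a lower class only when it is effectively segregated from the dose calculation.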

For a crash course in software safety classification, check out our trilogy of blog posts, The ABCs of Software Safety Classification.

Pitfall 2 - Info inconsistencies, quality quibbles

Technical files are an evolving and dynamic corpus, often changing considerably over the course of medical-device development.

However, it is imperative to ensure that consistency and quality are always maintained. Contradictory information and avoidable omissions can negatively impact certification timelines. Common examples include validator independence statements that contradict the validators listed in reports, missing validation-and-verification evidence, and incomplete test coverage. Requirements can even contradict each other outright, for example a security requirement mandating always-offline operation while a software requirement demands online functionality.

These mismatches erode credibility, trigger avoidable query rounds and non-conformities, and can severely delay conformity assessment.

Prevent consistency drift by enforcing a single source of truth with rigorous change control. There should be clear traceability between policies, plans, and the resulting evidence produced. Utilise structured reviews that explicitly check cross-document consistency. When changes are made, update the impacted artefacts in the same cycle, record the rationale, and capture approvals. These approaches help to preserve coherence, strengthen audit readiness, and keep the technical file submission ready.

Pitfall 3 - Inappropriate validator independence

Evidencing appropriate validator independence is a frequent area of friction for manufacturers, especially those with smaller teams.

The wording of IEC 82304-1 is often a point of confusion, especially regarding what level of independence is required and what a "designer" constitutes in this context. Typically, “designer” encompasses the full development team: technical choices made before and during implementation are design activities, so developers are usually designers.

Critically, manufacturers should leverage the flexibility in IEC 82304-1 Clause 6.1.f and Annex A Clause 6 to avoid overcommitting to a level of independence they cannot achieve.

A strict policy that bars anyone involved in any design from any validation maximises separation but is impractical for small teams.

A pragmatic policy that prevents those who designed a specific software item from validating that same item maintains integrity through a "separate person"-based approach and is feasible for organisations of almost any size.
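A "separate person" policy of this kind is straightforward to check against your records. The sketch below assumes you can list who designed and who validated each software item; the function name, item names, and people are hypothetical.

```python
def independence_violations(designers_by_item, validators_by_item):
    """Return, per item, anyone who both designed and validated that item."""
    violations = {}
    for item, validators in validators_by_item.items():
        designers = designers_by_item.get(item, ())
        overlap = set(validators) & set(designers)
        if overlap:
            violations[item] = sorted(overlap)
    return violations


designers = {
    "dose-calculator": {"alice", "bob"},
    "report-export": {"carol"},
}
validators = {
    "dose-calculator": {"carol"},         # fine: carol did not design this item
    "report-export": {"carol", "dave"},   # carol designed this item
}
print(independence_violations(designers, validators))
```

Running a check like this before each release also guards against validation reports that contradict the independence statement in your plan.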

For a full breakdown of optimal IEC 82304-1 validation strategy and validator independence, check out our full blog post here.

In conclusion

We’ve explored how to effectively utilise state-of-the-art standards, craft strong use and software requirements, apply IEC 62304 software safety classification wisely, set appropriate validator independence, and keep the technical file coherent through traceability, change control, and rigorous cross-checks.

By applying the outlined pillars, and avoiding the discussed pitfalls, manufacturers can build an EU MDR-ready software technical file that is coherent and evidence based, streamlining their certification.
